Here’s the hard truth: AI assistants don’t “find” you; they select you. If ChatGPT, Gemini, Copilot, or Perplexity can’t justify your page as a safe, authoritative source, you never make the cut.

Want citations? Engineer your content so models have to pick you or risk invisibility in AI search.

Getting cited by AI is not the same game as ranking in Google. Traditional SEO still matters, but generative engines weigh authority signals, structured data, freshness, and extractable formats differently.

Multiple large-scale studies now show that AI platforms cite domains with clear entity trust, tight information architecture, and modular content blocks that are easy to quote. We’ll use those patterns to build your playbook.

If you’re building a moat (brand + links + structure), use white-hat authority strategies that compound.

And if you operate in competitive industries (SaaS, fintech, legal), you’ll need vertical-specific topical authority.

Key Takeaways

- Engineer extractability: short answers, bullets, tables, and FAQ schema.

- Show real freshness: updated data, dated changelogs, and faster pages.

- Reinforce entity trust: authors, org schema, credible mentions, editorial links.

- Measure like CRO: track citation rate by engine and iterate monthly.

What is an AI Citation?

An AI citation is when a model (ChatGPT, Gemini, Copilot, Perplexity) names or links your page as support for its answer. This is the new distribution layer.

Nail it, and you become the default source consistently. Ready to architect for selection?

AI platforms now front-load answers and tuck links into expandable sections or sidebars. Your brand is seen only if it’s cited.

Research across millions of AI results shows models blend retrieval (live web or curated indices) with training priors, then attribute to sources that look safest: strong entities, clean structure, and current updates.

Translation: engineer your pages for extraction and trust, not just rankings.

- What counts as a citation? A visible source link in an AI answer (inline, footnote, or “sources” module), or an explicit brand/domain mention.

- Where do citations show? Google AI Overviews, Edge/Copilot answers, Perplexity cards, ChatGPT with browsing, and countless enterprise assistants.

- Who gets picked? Sites with strong entity signals (author/org), schema, freshness, and extractable formats (tables, lists, FAQs).

The Shift in Distribution (What changes vs. SEO?)

AI doesn’t return ten blue links; it synthesizes and justifies with a handful of sources. Two consequences:

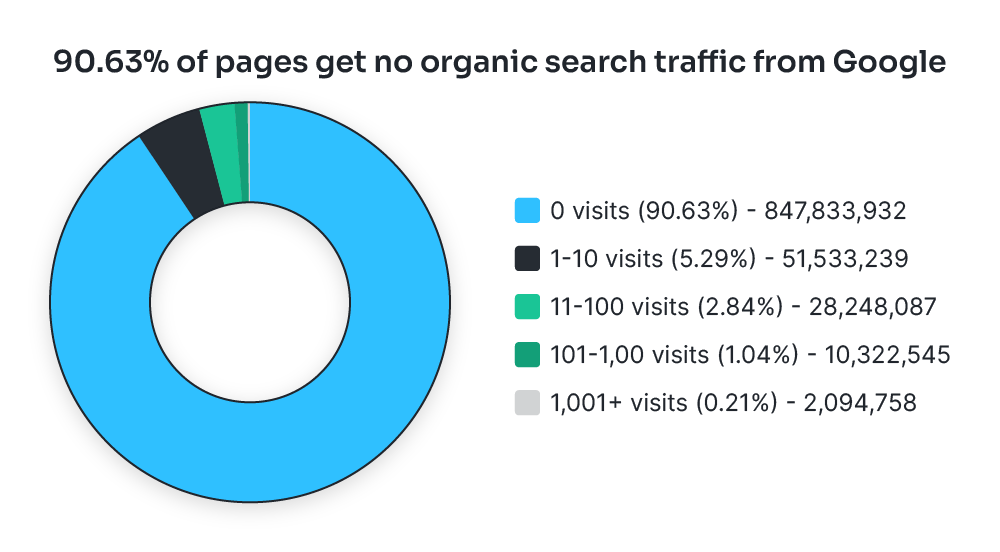

Competition compresses from page-one SERPs to 2–6 cited slots. Models often diverge from Google’s top results, especially outside Perplexity, so a pure-SEO strategy misses surface area.

Key differences you need to internalize now:

- Overlap is limited. On average, only a minority of AI-cited URLs are also top-10 in Google; Perplexity is the exception with higher overlap.

- Entity > exact match. Authority and identity consistency tend to beat keyword density.

- Extractability wins. Models prefer content with modular, quotable units.

Table: How major AI engines tend to cite

| Platform | Typical Citation Placement | Bias Tendencies (observed) | Overlap with Google Top 10 | Notes |
| --- | --- | --- | --- | --- |
| ChatGPT (w/ browsing) | Footnotes or “sources” at end | Often favors high-authority domains & reference hubs | Lower than Perplexity | Source sets vary by browsing provider & recency windows. |
| Google AI Overviews | Inline cards beneath answer | Heavier crossover with Google index | Mixed; some studies show partial alignment | Retrieval heavily influenced by Google’s ranking + freshness. |
| Perplexity | Prominent source cards | Higher SERP overlap vs. others | Highest of the group in one study | Strong emphasis on recent, reputable sources. |
| Copilot | Collapsed answer w/ sources | Leans Microsoft ecosystem + authority sites | Not well-published | Placement affects click propensity. (Inference from pattern reviews) |

Note: Patterns change quickly; multiple researchers report citation drift of up to ~60% month over month across platforms. Design for agility.

Checklist: Are you citation-ready today?

- Does each key page have Article/HowTo/FAQ schema?

- Is author/entity data consistent across your site and knowledge panels?

- Are your most linkable assets updated in the last 90 days?

- Do priority pages use scannable blocks (tables, bullets, TL;DRs)?

- Can your pages load under 2 seconds on mobile? (Speed matters for crawl + UX.)
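The schema and scannable-block items in this checklist can be spot-checked mechanically. Here is a minimal sketch using only Python's standard library: it scans an HTML string for JSON-LD `@type` values and counts tables and lists. The names `PageAudit` and `audit_html` and the sample page are hypothetical, and the sketch ignores `@graph` wrappers and microdata, so treat it as a starting point rather than a full validator.

```python
import json
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects JSON-LD @type values and counts <table>, <ul>, and <ol> tags."""

    def __init__(self):
        super().__init__()
        self.schema_types = set()
        self.tables = 0
        self.lists = 0
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []
        elif tag == "table":
            self.tables += 1
        elif tag in ("ul", "ol"):
            self.lists += 1

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                doc = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # malformed JSON-LD is skipped, not treated as fatal
            items = doc if isinstance(doc, list) else [doc]
            for item in items:
                if not isinstance(item, dict):
                    continue
                declared = item.get("@type")
                types = declared if isinstance(declared, list) else [declared]
                self.schema_types.update(t for t in types if t)


def audit_html(html):
    """Return a small report of schema types and scannable blocks (hypothetical helper)."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    return {
        "schema_types": sorted(parser.schema_types),
        "has_table": parser.tables > 0,
        "has_list": parser.lists > 0,
    }


# Illustrative page only; in practice you would pass your own fetched HTML.
sample = """
<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": []}</script>
</head><body><ul><li>Short answer</li></ul><table><tr><td>1</td></tr></table></body></html>
"""
report = audit_html(sample)
print(report)
```

Running this across your priority URLs gives a quick yes/no per page before you invest in deeper fixes.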

Where Links Fit

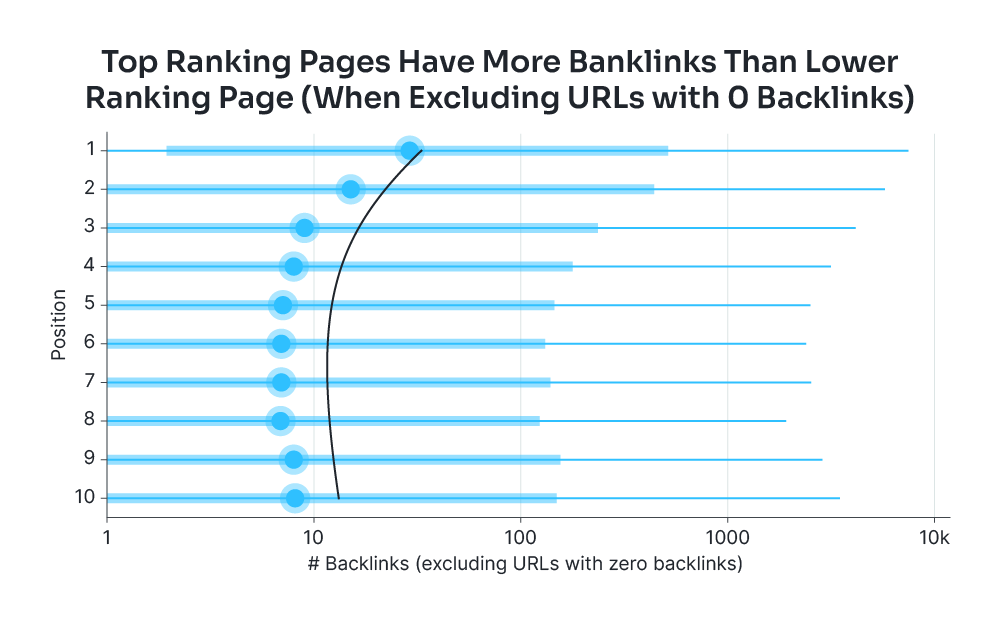

Backlinks remain a trust accelerant. Studies of AI citation sets show models lean toward established, referenced entities, which usually correlates with strong link profiles even when rankings don’t fully overlap with citations.

Operating in enterprise or regulated niches? Your content needs category-level authority and tight brand governance (authors, compliance, claims).

What Drives AI Citations

AI citations are selection events driven by entity trust, structured signals, freshness, and extractability. Engines use retrieval + ranking to assemble “safe” sources.

If your page isn’t easy to parse, verify, and attribute, it won’t be picked no matter how “good” it reads. Let’s unpack the mechanics.

Behind every attribution is a pipeline: retrieve → rank → synthesize → justify.

Retrieval sources differ (Google index, Bing index, proprietary crawls, partner data), but the ranking layer tends to elevate recognized entities with clear structure and recent updates.

Multiple analyses show partial alignment with SEO rankings but notable divergence, especially in ChatGPT and Gemini, meaning you can’t rely solely on SERP position.

You must win on-page structure and entity trust to be in the candidate set consistently.

- Entity signals: consistent org/author profiles, About/Contact pages, and linked knowledge sources.

- Structure: schema (Article, FAQ, HowTo), semantic HTML, scannable blocks.

- Freshness: visible update cadence and current data.

- Extractability: quotable snippets, tables, and definitions that stand alone.

Inputs models reward

How AI Engines Choose What to Cite

LLMs cite pages that feel like “safe bets”: strong entity signals, clean structure, fresh updates, and information that’s easy to extract. Ranking helps, but it’s not destiny.

Build for retrieval and attribution, not just keywords, and you’ll start showing up in the sources. Ready?

AI assistants run a pipeline: retrieve → rank → synthesize → justify. Retrieval pulls candidates from their index (or the open web).

Ranking elevates pages with recognizable entities, recent updates, and scannable structure. Synthesis writes the answer.

Justification attaches sources that minimize risk (credible, current, attributable). Several large analyses confirm two truths: (1) Google rankings influence but don’t fully determine citations; (2) platforms don’t cite the same sources.

Perplexity overlaps most with SERPs; ChatGPT/Gemini diverge more. That’s why you optimize for extractability + entity trust, not just position.

What Signals Matter Most

You don’t need to guess. Across millions of observed AI citations, four classes of signals appear repeatedly.

The bullets below summarize what to implement and why each matters for selection. The table that follows gives you a quick deployment plan you can steal.

- Entity & Brand Trust: Recognizable organizations/authors, consistent NAP/identity, robust About/Contact pages. Safer to cite.

- Structure & Schema: Article/FAQ/HowTo schema, tight H2/H3 hierarchy, clean HTML. Makes content machine-parsable.

- Freshness & Recency: “Updated on” stamps, new data, changelogs. Reduces hallucination risk.

- Authority & Coverage: Links and mentions from credible publications; comprehensive topical coverage. AI treats these as reliability proxies.
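The entity and freshness signals in the list above can be declared explicitly in Article markup. The sketch below builds a JSON-LD object with schema.org's `author`, `publisher`, `datePublished`, and `dateModified` properties. The helper name `article_jsonld`, the person, the organization, and the example.com URLs are all placeholders, not a prescribed implementation.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, author_url, org_name, org_url,
                   published, modified):
    """Build Article markup tying a page to a named author and organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),  # should match the visible "Updated on" stamp
    }


# All values below are hypothetical placeholders.
markup = article_jsonld(
    headline="How To Get Cited in AI",
    author_name="Jane Doe",
    author_url="https://example.com/authors/jane-doe",
    org_name="Example Co",
    org_url="https://example.com",
    published=date(2025, 6, 1),
    modified=date(2025, 9, 30),
)
print(f'<script type="application/ld+json">{json.dumps(markup, indent=2)}</script>')
```

Keeping the author and organization values identical across every page is what makes the identity "consistent" in the sense used above.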

Implementation Cheatsheet (Deploy This Week)

| Signal | What it Does for AI | Fast Move (48–72 hrs) | Proof/Support |
| --- | --- | --- | --- |
| Entity strength | Lowers “risk” to cite you | Unify org/author schema, expand About/Contact, consistent bios | CXL: entity trust is table stakes for being cited. |
| Structure & schema | Improves parsing/extraction | Add Article + FAQ schema to top pages; refactor headers | SEL study: structured pages show up more often. |
| Freshness | Favored in RAG ranking | Add visible update stamps; refresh stats quarterly | Writesonic: freshness correlates with citations. |
| Authority | Increases selection odds | Land 3–5 editorial links to the page; secure quotes in media | Ahrefs/SEL: authority correlates with selection. |
| Extractability | Enables sentence-level attribution | Add TL;DR, bullets, and a data table per section | Platform pattern analyses highlight quotable blocks. |

Do Rankings Matter?

Here’s the nuance most teams miss: strong rankings help, but they don’t guarantee citations.

Ahrefs found 76% of Google AI Overview citations come from top-10 pages, yet across ChatGPT, Gemini, and Copilot the overlap drops to ~12% on average, while Perplexity is the outlier with much higher overlap.

Translation: SEO is necessary but not sufficient; you need AI-ready structure and signals to convert rank into citations.

If you rank but don’t get cited: your page likely lacks extractable blocks (tables, definitions, numbered steps) or visible freshness signals.

If you’re cited without ranking: you’ve nailed entity/structure. Double down and build links to lock in authority.

Callout: If your market is complex (SaaS, fintech, legal), institutional trust matters even more. Tighten governance (authors, claims, references) and build topical hubs.

Platform Bias is Real

Different engines privilege different corners of the web. Designing for one can leave you invisible elsewhere.

Use the table below to align your content assets with each engine’s tendencies, then build overlap assets (e.g., Wikipedia-style explainers, community threads, news mentions) as insurance.

| Platform | Common Citation Traits | What to Ship |
| --- | --- | --- |
| ChatGPT (with browsing) | Leans to authoritative explainers, research hubs, recognized entities | Publish “definitive guides” with methodology sections and author credentials |
| Google AI Overviews | Heavier SERP alignment, freshness bias | Keep pages updated; map your H2s to query sub-intents |
| Perplexity | Prominent source cards, strong recency + SERP overlap | Create concise answer cards: bullets, tables, definitions per section |
| Copilot | Authority + Microsoft ecosystem tilt (observed) | Strengthen E-E-A-T signals; add FAQs with schema for direct pull quotes |

The “Extractability” Play

If a model can’t lift a clean snippet, it won’t risk citing you. Fix this fast with a repeatable section pattern. The steps below create citation-ready blocks that models prefer to quote or footnote.

- Lead with a 30–50 word answer. Clear, non-hedged, benefit-forward.

- Follow with a numbered process or table. One task per line, scannable.

- Add a mini-dataset. Original numbers or definitions the model can reference.

- Stamp with freshness. “Updated Sep 2025,” plus changelog if applicable.

- Wrap in schema. FAQ for Q&A, HowTo for processes, Article for the page.
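For the last step, FAQ markup follows a fixed shape in schema.org: a `FAQPage` whose `mainEntity` is a list of `Question` items, each with an `acceptedAnswer`. A minimal sketch of generating it from question/answer pairs is below. The helper name `faq_jsonld` is hypothetical; the sample pair is taken from this article's own FAQ.

```python
import json


def faq_jsonld(pairs):
    """Turn (question, answer) pairs into schema.org FAQPage markup."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "Should I allow AI crawlers?",
        "If citations are a goal, yes. Permit major AI user agents you're "
        "comfortable with in robots.txt to be eligible for retrieval.",
    ),
])
print(json.dumps(faq, indent=2))
```

The answer text in the markup should match the visible answer on the page; the markup describes the content, it does not replace it.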

Example block: What is vector recall in RAG?

Vector recall measures how many relevant documents your retriever surfaces from the index for a given query. Higher recall increases the chance your generator cites correct context.

Optimize recall before generation or you’ll ship elegant nonsense that never gets cited.

- Recall levers: embedding choice, index hygiene, chunking, query expansion

- Quick test: Measure recall@k on 20 queries; raise k, compare latency

- Schema: Add FAQ item “What is vector recall?” with `acceptedAnswer`

- Update: Reviewed Sep 30, 2025
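The "quick test" in the example block above is easy to make concrete. Here is a small sketch of recall@k, assuming you already have, for each query, the retriever's ranked document IDs and a hand-labeled set of relevant IDs. The function names and the document IDs are illustrative only.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents that appear in the top-k retrieved."""
    if not relevant:
        return 0.0
    top_k = set(retrieved[:k])
    return len(top_k & set(relevant)) / len(relevant)


def mean_recall_at_k(runs, k):
    """Average recall@k over (retrieved, relevant) pairs, one pair per query."""
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)


# Two toy queries; a real test would use the ~20 queries suggested above.
runs = [
    (["d1", "d7", "d3", "d9"], {"d1", "d3"}),
    (["d4", "d2", "d8", "d5"], {"d5", "d6"}),
]
for k in (2, 4):
    print(k, mean_recall_at_k(runs, k))
```

Raising `k` from 2 to 4 lifts mean recall from 0.25 to 0.75 on this toy data, which is the trade-off to weigh against the extra latency of retrieving more documents.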

Multiple studies now associate visible recency signals with higher selection probability, especially in Perplexity and AI Overviews.

Don’t just change the date; refresh data points, examples, and screenshots so the content actually improves. Track refresh cadence on a calendar and prioritize pages with decaying traffic or mentions.
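If you keep last-updated dates in a spreadsheet or CMS export, flagging overdue pages is a few lines of date arithmetic. The sketch below uses the 90-day threshold from this guide; the function name `stale_pages`, the paths, and the dates are made up for illustration.

```python
from datetime import date


def stale_pages(last_updated, today, max_age_days=90):
    """Return (url, age_in_days) for pages older than the threshold, oldest first."""
    ages = {url: (today - updated).days for url, updated in last_updated.items()}
    stale = [(url, age) for url, age in ages.items() if age > max_age_days]
    return sorted(stale, key=lambda item: item[1], reverse=True)


# Hypothetical inventory of priority pages and their last meaningful update.
inventory = {
    "/pricing": date(2025, 8, 15),
    "/guide": date(2025, 5, 1),
    "/benchmark": date(2025, 6, 20),
}
overdue = stale_pages(inventory, today=date(2025, 9, 30))
print(overdue)
```

The date you record should be the last meaningful update (new data, examples, screenshots), not the last time someone changed the stamp.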

Quick wins this week

- Refresh top 10 money pages with new stats and a dated “What changed” note.

- Add a 3–5 row table per section summarizing definitions, metrics, or examples.

- Convert your best paragraphs into bullets and FAQs with schema.

Authority Still Compounds Everything

Earning editorial links and mentions raises your baseline selection odds even when rankings diverge from citations.

Make link building boring and systematic: target industry roundups, data studies, and practitioner quotes. Pair that with sector hubs to win in product-led searches that AI assistants frequently answer with list-style sources.

Do This Next: 6 Tactical Moves That Get You Cited

You don’t get cited by chance; you engineer it. Optimize for retrieval, extraction, and safety so models pick you during “justify” mode.

Ship these moves this week and recheck your prompts across ChatGPT, Gemini, Copilot, and Perplexity.

- Reverse-engineer citations: Run your target prompt in each engine; copy winning structures, then go deeper.

- Design for extractability: Answer → bullets → compact table → FAQ; keep blocks quotable.

- Show real freshness: Update stats, screenshots, and add a dated changelog.

- Add schema + open bots: Article/FAQ/HowTo; allow major AI user agents in robots.txt.

- Publish original data: Small survey, benchmark, or dataset that others will reference.

- Harden authority: Earn editorial links, tighten author/org schema, fix Core Web Vitals.
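For the "open bots" move, the sketch below generates a robots.txt that explicitly allows a short list of AI crawlers, then checks the result with Python's built-in `urllib.robotparser`. The user-agent tokens shown (`GPTBot`, `OAI-SearchBot`, `PerplexityBot`) are ones the vendors have published, but tokens change, so confirm the current list in each vendor's documentation. The helper name, the `/admin/` path, and example.com are assumptions.

```python
from urllib.robotparser import RobotFileParser

# Assumed list; verify current tokens against each vendor's crawler docs.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "PerplexityBot"]


def build_robots_txt(allowed_agents, disallow_paths=("/admin/",)):
    """Allow the named agents site-wide except for the disallowed paths."""
    lines = []
    for agent in allowed_agents:
        lines.append(f"User-agent: {agent}")
        # Disallow rules come first so first-match parsers also honor them.
        lines += [f"Disallow: {path}" for path in disallow_paths]
        lines += ["Allow: /", ""]
    lines.append("User-agent: *")
    lines += [f"Disallow: {path}" for path in disallow_paths]
    return "\n".join(lines) + "\n"


robots_txt = build_robots_txt(AI_USER_AGENTS)
print(robots_txt)

# Sanity-check the rules locally before deploying.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in AI_USER_AGENTS:
    print(agent, parser.can_fetch(agent, "https://example.com/guide"))
```

Allowing a crawler makes a page eligible for retrieval; it does not by itself earn a citation.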

A lean deployment gets you into candidate sets and raises selection odds even if you’re not #1 in Google. After shipping, rerun your prompts and log citation deltas monthly.

| Move | Fast Action (48–72 hrs) | Why It Works |
| --- | --- | --- |
| Reverse-engineer | Collect cited URLs; mirror structure | Matches extraction patterns |
| Extractability | Add TL;DR, bullets, 3–5 row table | Clean, quotable snippets |
| Freshness | “Updated on” + changelog | Recency bias in retrieval |
| Schema + bots | FAQ/HowTo + allow GPT/Perplexity | Machine-parsable + retrievable |
| Original data | Publish a mini-dataset | Becomes the source |
| Authority | 3–5 editorial mentions | Safer entity to cite |

Re-run, record, iterate.

How To Know You’re Being Cited (Tools, Checks, KPIs)

If you don’t instrument this, you’ll guess. Log citations per prompt, per engine, and iterate monthly.

Use platform-visible source cards + studies on overlap/drift to decide where to push structure, freshness, and authority next.

Most AI platforms expose citations in UI elements (cards, footnotes, inline links). Start by defining a prompt set (10–20 high-intent queries), then benchmark who gets cited across ChatGPT (with browsing), Google AI Overviews, Perplexity, and Copilot.

Studies show AI Overviews cite top-10 pages ~76% of the time, while other engines diverge sharply, so track per-engine performance, not just SERP rank. Expect “citation drift” month to month; plan a recurring review.

Monthly workflow: collect citations for your prompt set → compare winners vs. your page’s structure/freshness → ship updates (schema, tables, TL;DR, new data) → re-run prompts → log deltas → repeat.

This CRO-style loop compounds selection odds over 60–90 days.

KPI Table (track these weekly)

| KPI | What It Measures | Target/Benchmark | Why It Matters |
| --- | --- | --- | --- |
| Prompt Citation Rate (PCR) | % of prompts where your URL appears in sources (per engine) | 30–40% by day 90 (varies by niche) | Direct indicator of “justify” selection, independent of SERP rank. |
| Engine Overlap Index | # of engines citing the same URL | ≥2 engines for top assets | Mitigates platform variance; reduces reliance on one ecosystem. |
| Freshness Interval | Days since last meaningful update | ≤90 days on priority pages | Recency is favored in retrieval/AI Overviews. |
| Referring Domains to Asset | New editorial links to the page/dataset | +5 in 30–45 days post-launch | Authority is a selection tiebreaker across engines. |
| Extractability Score | Presence of TL;DR, bullets, 3–5 row table, FAQ schema | 100% on target pages | Increases snippet lift and safe attribution. |
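The first two KPIs in the table fall straight out of a simple log. Below is a rough sketch, assuming each observation is recorded by hand as an (engine, prompt, cited URLs) tuple. The function names, engines, prompts, and URLs are all illustrative, and matching is by exact URL string, so normalize trailing slashes and tracking parameters before logging.

```python
from collections import defaultdict


def prompt_citation_rate(observations, my_url):
    """Per engine: share of logged prompts whose sources include my_url."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for engine, _prompt, cited_urls in observations:
        totals[engine] += 1
        if my_url in cited_urls:
            hits[engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}


def engine_overlap_index(observations, my_url):
    """Number of distinct engines that cited my_url at least once."""
    return len({engine for engine, _prompt, cited in observations if my_url in cited})


# Hypothetical log for one review cycle.
MY_URL = "https://example.com/guide"
observations = [
    ("perplexity", "q1", [MY_URL, "https://other.example/a"]),
    ("perplexity", "q2", ["https://other.example/b"]),
    ("chatgpt", "q1", [MY_URL]),
    ("chatgpt", "q2", [MY_URL]),
    ("copilot", "q1", []),
    ("copilot", "q2", ["https://other.example/a"]),
]
rates = prompt_citation_rate(observations, MY_URL)
overlap = engine_overlap_index(observations, MY_URL)
print(rates, overlap)
```

Keeping the raw log, rather than only the computed rates, lets you re-cut the numbers by prompt or by page later.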

Bullet-point checks (run each review):

- Source visibility: Are you cited in AI Overviews, Perplexity cards, or ChatGPT footnotes for each prompt? Screenshot and archive.

- Gap analysis: What do cited winners have that you don’t (original data, table formats, update stamps)? Mirror structure, add unique proof.

- Drift watch: Did your citation set change ≥30% vs. last month? If yes, prioritize freshness and overlap sources to stabilize.
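The drift check needs a definition of "change" before the 30% threshold means anything. One reasonable choice, and it is an assumption here rather than a standard the studies above prescribe, is one minus the Jaccard similarity of the two months' cited-URL sets. The URLs below are placeholders.

```python
def citation_drift(previous, current):
    """1 - Jaccard similarity between two sets of cited URLs (0 = identical, 1 = disjoint)."""
    prev, curr = set(previous), set(current)
    union = prev | curr
    if not union:
        return 0.0
    return 1 - len(prev & curr) / len(union)


# Hypothetical citation sets for the same prompt set in consecutive months.
last_month = {"https://a.example", "https://b.example", "https://c.example", "https://d.example"}
this_month = {"https://a.example", "https://b.example", "https://c.example", "https://e.example"}

drift = citation_drift(last_month, this_month)
needs_review = drift >= 0.30  # threshold taken from the drift-watch check above
print(round(drift, 2), needs_review)
```

With one URL swapped out of four, three of five distinct URLs are shared, so drift is 0.4 and the review flag trips.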

Why this works: You’re aligning iteration with how engines actually select sources: AI Overviews lean on high-ranking, current pages; other engines cite more diversely and shift over time.

Measure by engine, fix extractability and freshness first, then authority, so your citation rate climbs predictably.

Conclusion

You don’t win AI citations by hoping; you win by engineering selection.

If your pages are structured, fresh, and attributable, they get pulled into answers across ChatGPT, Gemini, Copilot, and Perplexity.

Rankings help, but AI engines cite pages that look safest to justify. Build for that.

Wrap this into a monthly loop: reformat priority pages (answer → bullets → table → FAQ → changelog), add schema, refresh real data, and track prompt citation rate by engine.

Reinforce entity trust with sustainable authority plays: editorial links and credible mentions beat shortcuts.

FAQ – How To Get Cited in AI

How long until I see citations?

Typically 30–90 days. Engines need to crawl, index, and re-rank after your structural and freshness updates. Re-run your prompt set monthly and log deltas.

Do I need to rank top-3 in Google to be cited?

No. Rankings correlate but don’t guarantee. Structure, entity trust, and freshness can earn citations even when you sit outside the top 10.

What’s the fastest lever to pull?

Extractability. Add a 30–50 word answer, bullets, a 3–5 row table, FAQ schema, and a visible “Updated on” stamp to each target section.

Which schema types matter most?

Article, FAQPage, and HowTo. They clarify intent and make snippets machine-parsable. Validate them and keep headings clean (H2/H3 hierarchy).

Is original data required?

Not required, but it’s a force multiplier. Publish a small dataset or benchmark. It attracts editorial links and makes you “source-worthy.”

Should I allow AI crawlers?

If citations are a goal, yes. Permit major AI user agents you’re comfortable with in robots.txt to be eligible for retrieval.

Why am I ranking but not cited?

Your page likely lacks extractable blocks or visible recency. Add TL;DRs, tables, FAQs, and update stale stats/screenshots.

How do links influence citations?

Editorial links increase perceived safety and authority. Build sector-relevant mentions to nudge your pages into the candidate set.