An unnatural link is any inbound or outbound hyperlink whose primary purpose is to manipulate search engine rankings rather than serve the informational needs of a human audience.

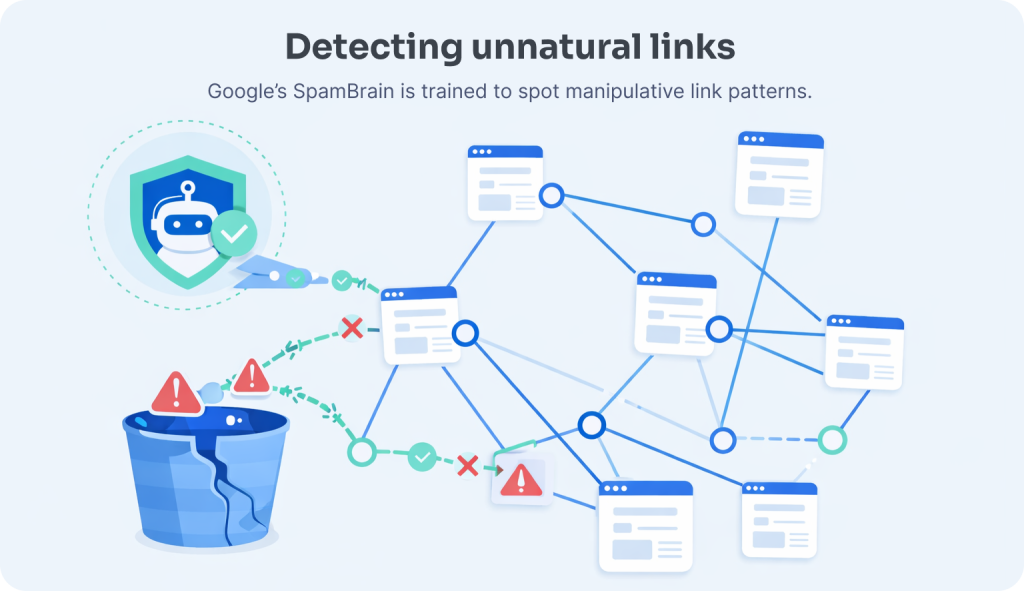

Google’s systems, and in particular its SpamBrain AI, are trained to identify these links, either discounting them silently or triggering the algorithmic and manual penalties that follow when they appear at scale.

The practical challenge with unnatural links is that they rarely announce themselves.

In this article…

- What are unnatural links and how does Google define them?

- What is the editorial vouch test, and why is it the most reliable diagnostic?

- How do you identify unnatural links in your backlink profile?

- What are the specific red flags that signal an unnatural link to Google?

- What are the seven link scheme types that most commonly produce unnatural links?

- What penalties does Google apply for unnatural link profiles, and how do they differ?

- How do you recover from an unnatural link penalty?

- What does a link acquisition process that never produces unnatural links look like?

- Concerned about unnatural links in your current backlink profile?

- Frequently Asked Questions

A legacy backlink profile contaminated with paid guest post placements, link farm inclusions, or automated directory submissions from three years ago can continue to suppress current rankings long after the original campaign has ended, and may be the reason a technically sound, well-resourced link building programme is underperforming its potential.

This page covers everything required to identify unnatural links in your own profile, understand the penalty mechanisms they trigger, and execute a remediation strategy that gives your legitimate link equity room to perform.

It is part of our white hat link building content hub, the complete strategic framework for building editorial authority that compounds over time.

Quick summary

- An unnatural link is any link placed to manipulate rankings rather than serve an editorial purpose. Google’s SpamBrain AI detects them by pattern, not by reviewing individual links.

- The most reliable diagnostic is the editorial vouch test: would this link exist if search engines did not exist?

- Unnatural links trigger two distinct penalty types: silent algorithmic devaluation via SpamBrain (no notification, 4 to 12 week recovery) or a manual action in Google Search Console (requires a reconsideration request, 2 to 4 weeks per review cycle).

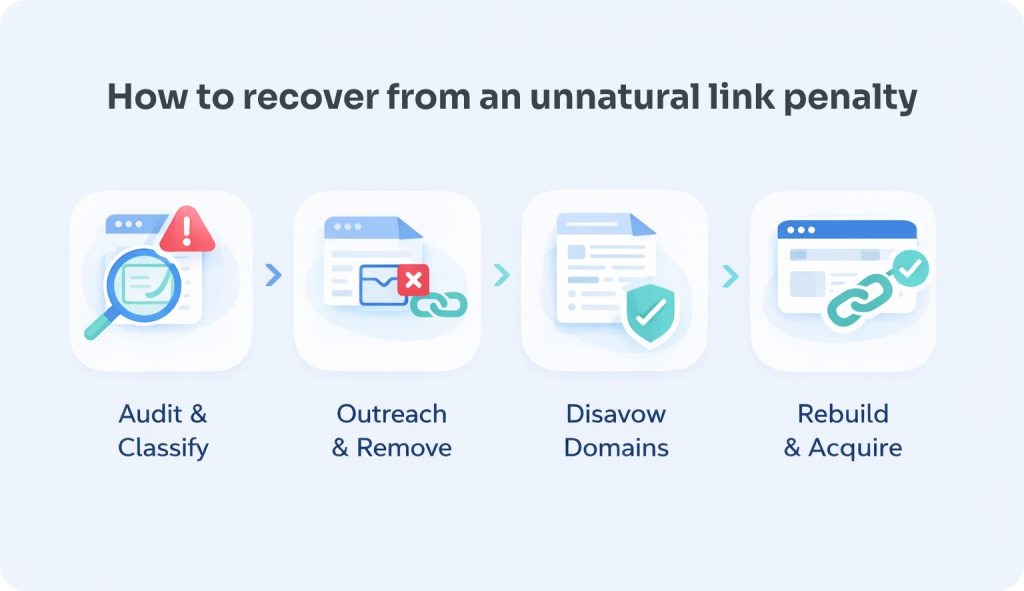

- Recovery follows four stages: full backlink audit, manual outreach for link removal, disavow file submission, and a sustained shift to white hat acquisition.

- BlueTree data across 200+ B2B SaaS audits found that 68% of sites with unexplained ranking plateaus had identifiable unnatural link patterns, with exact-match anchor text concentration the most common flag.

What are unnatural links and how does Google define them?

Unnatural links are hyperlinks whose existence is explained primarily by an intent to manipulate search engine rankings rather than by the genuine editorial judgment of the linking publisher.

Google’s spam policies define them as any links that were not “vouched for” by the site owner or placed through an independent editorial decision, including all forms of paid, exchanged, or manufactured link acquisition.

The definition turns on the concept of editorial independence, the same principle that underpins Google’s entire link authority model.

When Google’s original PageRank algorithm was designed, it treated a link as a signal that the linking publisher had made a judgment: this content is useful enough to direct my audience toward it.

An unnatural link is one that was placed for reasons other than that judgment: commercial payment, reciprocal arrangement, automation, or network membership.

What makes the definition practical rather than purely theoretical is this: unnatural links are identifiable by pattern, not by inspection of any individual link’s motivation.

No SEO tool can directly assess the intent behind a specific link. What they can do, and what Google’s systems do at scale, is identify the statistical signatures of coordinated, non-editorial linking behaviour.

Those signatures are what define a link as unnatural in the operational sense that triggers penalties.

The inbound and outbound dimensions are both relevant. Unnatural inbound links (links pointing to your site that were acquired through manipulation) are the primary concern for most sites.

But Google also evaluates your site’s outbound linking patterns: a site that participates in a paid link scheme as a seller (placing links within its content for payment) accumulates its own penalty risk, separate from the sites it is linking to.

Our resource on selling links covers the outbound dimension of this risk in detail.

What is the editorial vouch test, and why is it the most reliable diagnostic?

The editorial vouch test is a single diagnostic question applied to any link under review:

“Would I want this link if search engines did not exist?”

If the honest answer is no, if the link provides no genuine referral traffic, audience value, or brand visibility independent of its PageRank transfer, it is almost certainly an unnatural link by Google’s definition.

This test cuts through the technical complexity of backlink analysis by returning to the first principle that Google’s link authority model is built on.

A natural link exists because a publisher judged that their audience would benefit from following it. An unnatural link exists because someone calculated that Google would credit it as a ranking signal.

These two motivations produce structurally different links, and the difference is visible in the statistical patterns that Google’s systems are trained to detect.

Applied practically, the editorial vouch test asks three questions about any link under review:

- Does the linking page have a genuine audience? A page with zero organic traffic, no social engagement, and content that exists solely as a vehicle for outbound links fails this test regardless of its domain rating. A link that no human will ever follow is a link that exists for search engines only.

- Does the surrounding content justify the link? A link placed within a paragraph that is topically relevant to both the linking page’s subject and the destination page’s content reflects an editorial decision. A link placed within thin, generic content that would have been identical without it does not.

- Would the link have been placed without a commercial arrangement? This is the intent question at the core of Google’s policy. If the honest answer to “would this publisher have linked to this page for free?” is no, because the content is not meaningfully better than alternatives, and the only reason for the placement is a financial or reciprocal arrangement, the link is unnatural by definition.

The test is particularly useful for auditing grey-area links (paid placements that are contextually relevant, or link exchange placements with genuinely complementary sites), where the technical quality signals may look clean but the motivational basis for the link is commercial rather than editorial.

Our guide to natural links covers what genuine editorial citation looks like in contrast to these grey-area cases.

How do you identify unnatural links in your backlink profile?

Identifying unnatural links requires a systematic audit across three data sources: your backlink profile in Ahrefs or Semrush, Google Search Console’s link report, and manual site-level review of the highest-risk referring domains.

The process moves from statistical pattern identification to individual link-level assessment, prioritising the links most likely to be contributing to current ranking suppression.

The audit process is sequential. Attempting to manually review every link in a large profile is neither necessary nor efficient.

The objective is to identify the domains and link patterns that present the highest algorithmic risk, assess them against the quality and policy criteria covered in our Google backlink policy guide, and make removal or disavow decisions proportionate to the identified risk.

Step 1: Full profile extraction

Export your complete referring domain list from Ahrefs Site Explorer or Semrush’s Backlink Audit tool. For established sites, this may involve thousands of referring domains; the filtering process in subsequent steps reduces this to a manageable working list.

Cross-reference with Google Search Console’s Links report to understand which links Google is actively attributing to your site; domains visible in third-party tools but absent from Search Console may already have been identified and discounted by Google’s systems.
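The cross-reference in this step can be sketched in a few lines of Python. This is a minimal illustration, assuming both exports have already been reduced to plain lists of referring domains; the function names `normalise_domain` and `missing_from_search_console` and the sample data are hypothetical, and none of the tools prescribes this exact workflow.

```python
def normalise_domain(raw: str) -> str:
    """Lowercase a domain and strip a leading 'www.' so exports compare cleanly."""
    domain = raw.strip().lower().rstrip(".")
    if domain.startswith("www."):
        domain = domain[4:]
    return domain


def missing_from_search_console(tool_domains, gsc_domains):
    """Return domains seen by a third-party tool but absent from the GSC export."""
    gsc = {normalise_domain(d) for d in gsc_domains}
    tool = {normalise_domain(d) for d in tool_domains}
    return sorted(tool - gsc)


# Hypothetical sample data standing in for real exports.
tool_export = ["WWW.Example-Blog.com", "news.example.org", "spam-directory.example"]
gsc_export = ["example-blog.com", "news.example.org"]

print(missing_from_search_console(tool_export, gsc_export))
```

Absence from the Search Console report is only a hint, since that report is a sample rather than a complete list, so the output is best treated as context for the manual review rather than proof that a domain has been discounted.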

Step 2: Automated toxicity screening

Both Ahrefs and Semrush apply automated quality scoring to referring domains. Semrush’s Toxicity Score, for example, evaluates over 45 criteria including domain reputability, organic traffic authenticity, anchor text patterns, and spam indicator presence.

Domains scoring in the high-risk range (above 60% toxicity in Semrush’s model) should be extracted as the primary audit list.

Important caveat: automated toxicity scores are a triage tool, not a verdict. They produce both false positives (legitimate sites that score poorly due to metric anomalies) and false negatives (sophisticated paid link networks that score cleanly because their sites are individually well-maintained).

The score identifies where to focus manual review, not which links to automatically disavow.
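As a rough sketch of that triage step, the snippet below turns a list of scored domains into a manual review queue. The `ReferringDomain` structure, its `toxicity` field, and the sample values are assumptions for illustration; a real export will have its own column names.

```python
from dataclasses import dataclass

HIGH_RISK_THRESHOLD = 60  # from the text: above 60% toxicity in Semrush's model


@dataclass
class ReferringDomain:
    domain: str
    toxicity: float  # 0-100 score as exported from the tool (assumed field name)


def manual_review_queue(domains, threshold=HIGH_RISK_THRESHOLD):
    """Return domains above the threshold, worst first, for manual review.

    The queue is a list of things to look at, not a list of things to disavow.
    """
    flagged = [d for d in domains if d.toxicity > threshold]
    return sorted(flagged, key=lambda d: d.toxicity, reverse=True)


# Hypothetical sample data.
sample = [
    ReferringDomain("trade-journal.example", 12),
    ReferringDomain("auto-directory.example", 88),
    ReferringDomain("guest-post-farm.example", 71),
]

for entry in manual_review_queue(sample):
    print(entry.domain, entry.toxicity)
```

Keeping the output as a queue rather than writing it straight to a disavow file reflects the caveat above: the score decides where to look first, and a human decides what happens next.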

Step 3: Manual domain-level review

For each high-risk domain identified in step 2, conduct a manual review applying the editorial vouch test and the red flag criteria covered in the next section.

Specifically assess: whether the site has genuine content and an identifiable audience, whether the linking page’s content is relevant to your site’s topic, whether the anchor text pattern appears coordinated, and whether the domain shows any of the network affiliation signals (shared hosting, overlapping backlink profiles, suspiciously similar content across multiple properties) that indicate link farm membership.

Step 4: Anchor text pattern analysis

Review your overall anchor text distribution as a separate audit dimension.

An unnatural anchor text pattern, where 30%+ of referring domains use identical exact-match commercial keyword anchors, is an independent risk signal regardless of the individual quality of the linking sites.

This pattern is one of SpamBrain’s highest-confidence manipulation indicators, and it can suppress rankings even when the majority of individual linking domains appear legitimate in isolation.
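A minimal sketch of this check is shown below, assuming the backlink export has been reduced to (referring domain, anchor text) pairs and that you supply your own list of commercial keywords. The function name `exact_match_share` and the sample data are hypothetical; the 30% figure is the threshold quoted above, not a value published by Google.

```python
from collections import defaultdict

EXACT_MATCH_RISK_SHARE = 0.30  # the 30%+ level described in the text


def exact_match_share(links, money_keywords):
    """Share of referring domains with at least one exact-match commercial anchor."""
    keywords = {k.strip().lower() for k in money_keywords}
    anchors_by_domain = defaultdict(set)
    for domain, anchor in links:
        anchors_by_domain[domain.lower()].add(anchor.strip().lower())
    if not anchors_by_domain:
        return 0.0
    exact = sum(1 for anchors in anchors_by_domain.values() if anchors & keywords)
    return exact / len(anchors_by_domain)


# Hypothetical sample data: (referring domain, anchor text).
links = [
    ("a.example", "best crm software"),
    ("b.example", "Best CRM Software"),
    ("c.example", "Acme"),
    ("d.example", "https://acme.example"),
]

share = exact_match_share(links, ["best crm software"])
print(f"{share:.0%} of referring domains use exact-match anchors")
print("flag for review" if share >= EXACT_MATCH_RISK_SHARE else "within range")
```

Counting by referring domain rather than by raw link keeps one sitewide placement from dominating the figure, which matches how the threshold is phrased in this step.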

Benchmarking context: Research consistently shows that unnatural links account for over 75% of SEO penalties on websites, according to Ahrefs data.

This proportion reflects how widespread manipulative link acquisition was during the early guest post and link farm era, and how many sites are still carrying legacy profile contamination from campaigns run under older strategic frameworks.

What are the specific red flags that signal an unnatural link to Google?

The red flags that identify unnatural links to Google’s detection systems fall into five categories: anchor text manipulation, topical irrelevance, structural placement anomalies, network affiliation signals, and traffic and engagement absence.

The presence of multiple flags on the same link or domain cluster significantly elevates the risk profile relative to any single flag in isolation.

Understanding these signals in detail is useful both for auditing existing profiles and for evaluating prospective link placements before acquisition.

Each red flag represents a pattern that Google’s systems have been specifically trained on, because each pattern is characteristic of the link schemes documented in the company’s spam policy enforcement history.

Manipulative anchor text

The most reliable single indicator of an unnatural link is exact-match commercial keyword anchor text appearing with disproportionate frequency across a backlink profile.

Organic editorial links produce diverse anchor text because independent writers use natural language: brand names, descriptive phrases, partial-match variants, URL anchors.

When a high proportion of links pointing to a specific page use identical or near-identical commercial keyword phrases, the coordination required to produce that pattern is implausible under organic editorial behaviour. Ahrefs research found that exact-match anchor text accounts for fewer than 1% of anchors on healthy profiles, making any concentration above 10% a significant outlier worth investigating.

At the individual link level, a specific link becomes suspicious when its anchor text is optimised for the recipient’s commercial keywords rather than descriptive of the linking page’s content.

A link using the anchor “best project management software for remote teams” within an article about productivity tools is contextually plausible.

The same anchor appearing within a general lifestyle article with no relevant context is not.

Topical irrelevance between linking and linked page

Contextual relevance between the linking page and the destination page is a baseline quality criterion in Google’s link evaluation model.

A link from a cooking blog to a B2B SaaS company’s pricing page fails the relevance test straightforwardly: no editorial judgment would produce that link, because the linking page’s audience has no interest in the destination.

The semantic distance between the topic clusters of the two pages is a signal Google’s NLP systems can evaluate at scale.

This criterion matters particularly for evaluating link farm placements, which typically publish content across multiple unrelated niches to make link placement appear contextual.

A site whose content spans “recipes, travel, finance, home improvement, and software reviews” with no editorial coherence is a content farm regardless of its individual article quality.

Structural placement anomalies

Links that appear in structurally anomalous positions (sitewide footers, sidebar widgets distributed across a network, low-visibility sections of a page, or hidden or near-invisible text) are unnatural by definition.

These placements are designed to pass PageRank to the destination while minimising the probability that a human user will ever follow the link.

A link that a publisher would not want their audience to see is a link that exists for search engines only. In Ahrefs analysis of penalised profiles, footer and sitewide links represented over 40% of links flagged as manipulative, making them the single most common structural violation by volume.

Footer links and widget-distributed links are a specific subcategory here. A single contextual footer link on a partner’s site may be editorially defensible in the context of a genuine business relationship.

Sitewide footer link placement across a network of sites, where the same anchor text link appears in the footer of every page on every property in the network, is one of the most transparent unnatural link patterns in existence and one that SpamBrain identifies with very high confidence.

Network affiliation and hosting signals

Individual links from sites that share IP infrastructure, nameserver patterns, or WHOIS ownership with other known link-selling properties are network-affiliated links by proxy, even if the individual site appears legitimate in isolation.

Google’s network-level analysis is specifically designed to identify these cluster relationships, which is why link farm networks and private blog networks (PBNs) become progressively easier to detect as they grow larger.

A network of 20 sites with a shared footprint is easier to identify than two isolated sites with the same owner.
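For your own audit, a crude version of this cluster analysis can be approximated by grouping referring domains on shared infrastructure values. The sketch below assumes you have already collected an IP address and nameserver for each domain; the function name `shared_footprint_clusters`, the dictionary layout, and the sample values are hypothetical, and this says nothing about how Google's own analysis is implemented.

```python
from collections import defaultdict


def shared_footprint_clusters(footprints, min_size=2):
    """Group domains by shared IP or nameserver; keep groups with min_size+ members."""
    groups = defaultdict(set)
    for domain, footprint in footprints.items():
        for key in ("ip", "nameserver"):
            value = footprint.get(key)
            if value:
                groups[(key, value)].add(domain)
    return {k: sorted(v) for k, v in groups.items() if len(v) >= min_size}


# Hypothetical sample data using documentation-reserved IP ranges.
footprints = {
    "site-a.example": {"ip": "203.0.113.10", "nameserver": "ns1.host.example"},
    "site-b.example": {"ip": "203.0.113.10", "nameserver": "ns1.host.example"},
    "site-c.example": {"ip": "198.51.100.7", "nameserver": "ns1.other.example"},
}

for (kind, value), members in shared_footprint_clusters(footprints).items():
    print(kind, value, members)
```

Shared hosting and popular DNS providers put many unrelated, legitimate sites on the same infrastructure, so a cluster here is a prompt for manual review alongside the content and backlink overlap signals, not evidence of a network on its own.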

Zero traffic and engagement

A link from a page that receives zero organic traffic, zero referral traffic, and zero social engagement is a link that exists in a content vacuum.

It was placed on a page that no human visits, which means its sole function is to signal PageRank transfer to Google’s crawl systems.

This is the cleanest single application of the editorial vouch test: a link that would never send a visitor to your site regardless of whether search engines existed is a link that exists only because search engines do.

“Toxic links is really a term that was invented by the SEO industry — it’s not something that we use at Google internally. We try to ignore links that we think are irrelevant or spammy.”

What are the seven link scheme types that most commonly produce unnatural links?

The seven link scheme types that most consistently produce unnatural links in modern backlink profiles are: paid links without disclosure, large-scale low-quality guest posting, excessive link exchanges, private blog networks (PBNs), automated link building, widget and footer link abuse, and non-editorial directory submissions.

Each scheme type produces a characteristic pattern that Google’s spam detection systems are specifically calibrated to identify.

These are the categories that appear most frequently in the backlink profiles of sites that have experienced ranking losses following Google’s spam updates, and they represent the tactical history of the SEO industry’s attempts to circumvent the editorial independence principle that underpins Google’s link authority model.

1. Paid links without disclosure

Any link acquired through payment, product exchange, or commercial arrangement that passes PageRank without the rel="sponsored" attribute violates Google’s spam policies directly.

This applies to paid guest posts, niche edit insertions, and link rental arrangements regardless of how they are marketed.

Our detailed resource on buying backlinks covers why this risk has increased significantly since SpamBrain’s enhanced detection capability was deployed in the August 2026 update cycle.
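Where a placement is already known to be paid, checking how it is marked up is straightforward. The sketch below uses Python's standard `html.parser` to list links to a target domain that carry neither `sponsored` nor `nofollow` in their `rel` attribute. The class and function names and the sample HTML are hypothetical, and treating `nofollow` as an acceptable qualifier is an assumption based on Google's guidance on qualifying outbound links. The script cannot tell whether a link was paid for; that knowledge has to come from you.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkRelAuditor(HTMLParser):
    """Collect (href, rel tokens) for every <a> pointing at the target domain."""

    def __init__(self, target_domain):
        super().__init__()
        self.target_domain = target_domain.lower()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href") or ""
        host = (urlparse(href).hostname or "").lower()
        if host == self.target_domain or host.endswith("." + self.target_domain):
            rel = set((attr_map.get("rel") or "").lower().split())
            self.findings.append((href, rel))


def unqualified_links(html, target_domain):
    """Return hrefs to the target domain with no sponsored/nofollow qualifier."""
    auditor = LinkRelAuditor(target_domain)
    auditor.feed(html)
    return [href for href, rel in auditor.findings
            if not rel & {"sponsored", "nofollow"}]


# Hypothetical page fragment from a site carrying a paid placement.
page = """
<p><a href="https://example.com/pricing">best crm</a>
<a href="https://www.example.com/blog" rel="sponsored noopener">Example</a>
<a href="https://other.example.net/">other</a></p>
"""

print(unqualified_links(page, "example.com"))
```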

2. Large-scale low-quality guest posting

Guest posting is a legitimate white hat tactic when it involves creating genuinely valuable content for an editorial audience and earning a link as a natural consequence of that contribution.

It becomes a link scheme when the primary purpose of the content is link acquisition: articles published across dozens of low-traffic, low-quality sites with keyword-optimised anchor text and no genuine audience engagement. Google’s spam update history shows that sites with more than 30% of their link profile from guest-post placements represent the most frequently penalised category in manual action reports.

The October 2026 spam update specifically targeted AI-generated guest post farms as a distinct enforcement category, signalling that the volume-driven guest posting model faces the highest current detection risk.

3. Excessive link exchanges

Coordinated reciprocal linking arrangements, where the mutual link is the purpose of the relationship rather than a natural consequence of genuine content collaboration, are a documented link scheme under Google’s spam policies.

Our dedicated resource on link exchanges covers the full compliance framework, including where the line between natural niche overlap and coordinated exchange activity sits in practice.

4. Private blog networks (PBNs)

PBNs are networks of sites, often built on expired domains with historical authority, under common or coordinated ownership, used to manufacture editorial-looking links at scale.

They represent the most sophisticated structural attempt to replicate the appearance of editorial citation without any of the underlying editorial reality. Google’s 2023 spam report noted that SpamBrain neutralised 45 times more spam than the prior detection generation, with link schemes accounting for the largest single category of enforcement actions.

SpamBrain’s network-level analysis is directly aimed at this scheme type, identifying the hosting, ownership, content, and backlink profile patterns that cluster PBN properties together regardless of how carefully each individual site is maintained.

5. Automated link building

Software-generated links (forum profile links, blog comment spam, automated directory submissions, and programmatic link insertion tools) are produced at scale and satisfy none of the editorial independence criteria that give links their authority signal.

Beyond the direct policy violation, automated links typically generate the highest-volume contamination of backlink profiles because the tools that create them operate without quality filters.

Sites that have used automated link building tools at any point in their history may have thousands of low-quality links that require systematic disavow remediation.

6. Widget and footer link abuse

Distributing anchor-text-optimised links through widgets, embeddable tools, or sitewide footers across a network of third-party sites creates a structural footprint that is highly visible in backlink data: identical anchor text, identical or near-identical surrounding content, and link placements on every page of every participating domain simultaneously.

This pattern is among the most detectable unnatural link structures because it produces velocity and structural uniformity that cannot plausibly arise from organic editorial activity.

“We’ve always had algorithms to help ensure that links are a signal of quality. When people attempt to game that, we try to address it.”

7. Non-editorial directories

Directory submissions that automatically accept all submitted sites without any editorial review process produce links that carry no endorsement signal, because no endorsement decision was made.

High-quality, selective industry directories with genuine editorial standards are a legitimate link source.

Mass-submission services that place your site in hundreds of auto-accept directories in a single batch are an unnatural link scheme, producing both the volume anomaly and the quality signal absence that SpamBrain targets.

Our guide to backlink spam covers how these mass-submission patterns manifest in profile data and what they contribute to overall toxicity scores.

What penalties does Google apply for unnatural link profiles, and how do they differ?

Google applies two distinct penalty mechanisms to unnatural link profiles: algorithmic devaluation through SpamBrain, which silently discounts identified manipulative links without notifying the site owner, and manual actions issued by human reviewers, which appear as notifications in Google Search Console and require active remediation and reconsideration requests to lift.

Both mechanisms can produce significant ranking losses, but they require different recovery approaches.

The distinction between these two penalty types is one of the most practically important concepts in backlink risk management, because they produce similar symptoms (unexpected ranking drops) but require fundamentally different responses.

“SpamBrain is our AI-based spam-prevention system. It has gotten better and better at detecting spam, including unnatural links.”

Algorithmic devaluation: the silent suppressor

SpamBrain’s real-time processing means that identified manipulative links can be devalued within hours or days of being crawled and assessed, without any notification to the site owner and without any entry in Google Search Console.

The effect is not a penalty in the traditional sense: Google does not reduce your rankings below where they would be without the links.

Instead, it credits those links as if they do not exist, meaning any ranking benefit you believed you were receiving from them is simply absent.

The practical consequence is that a site whose rankings plateau or decline gradually despite an active link building programme may be running an undetected treadmill, acquiring links that are immediately devalued by SpamBrain while the profile contamination from earlier campaigns continues to suppress overall domain trust.

This is the most common presentation of an unnatural link problem in 2026: not a sudden penalty, but a sustained underperformance relative to the investment in link acquisition.

Recovery from algorithmic devaluation does not require a reconsideration request. It requires removing or disavowing the contaminated links and acquiring sufficient clean editorial signal to shift the overall profile quality assessment.

Timelines are typically 4–12 weeks following effective remediation, depending on the severity of contamination and the strength of existing clean signals.

Manual actions: documented enforcement

A manual action is issued when a human reviewer at Google determines that a site has violated its spam policies in a way that requires direct enforcement beyond algorithmic devaluation.

Manual actions appear in Google Search Console under Security and Manual Actions, and they specify the nature of the violation, including “unnatural links to your site” and “unnatural links from your site” as distinct manual action categories.

Manual actions for unnatural links typically follow patterns that SpamBrain has flagged as sufficiently severe to warrant human review: large-scale paid link schemes, PBN participation at significant scale, or link profiles so contaminated that algorithmic devaluation alone is insufficient to neutralise the ranking manipulation.

The consequences are more severe than algorithmic devaluation: a sitewide manual action can remove a domain’s rankings across all query categories simultaneously.

Recovery from severe manual actions affecting established sites can take 6–18 months of sustained remediation work even when the underlying issues are fully addressed.

Recovery from a manual action requires: documenting the remediation steps taken (link removal attempts, disavow file submission), demonstrating that the violated practice has genuinely stopped, and submitting a reconsideration request through Search Console that clearly explains the violation, the remediation, and the steps taken to prevent recurrence.

Reconsideration requests are reviewed by human reviewers and typically take 2–4 weeks to receive a response, with further rounds sometimes required if the first request is rejected.

The traffic impact scale: Sites penalised for link violations, whether through algorithmic devaluation or manual action, commonly see organic traffic drops of 50% or more. In extreme cases involving de-indexation, visibility across all organic queries drops to effectively zero.

How do you recover from an unnatural link penalty?

Recovery from an unnatural link penalty follows a four-stage process: comprehensive profile audit and risk classification, manual outreach for link removal, disavow file construction for links that cannot be removed, and a sustained shift to white hat link acquisition that rebuilds clean editorial signal over time.

The sequence matters: disavowing without auditing produces an incomplete disavow file; auditing without shifting acquisition strategy produces a profile that re-contaminates.

Recovery timelines vary significantly based on the severity of the contamination, whether the penalty is algorithmic or manual, and the strength of the clean signals already present in the profile.

BlueTree data insight: Across 200+ B2B SaaS backlink audits conducted between 2024 and 2026, BlueTree found that 68% of sites experiencing unexplained ranking plateaus had identifiable unnatural link patterns, with exact-match commercial anchor text concentration being the single most common flag, appearing in 82% of penalised profiles.

Sites that completed a full disavow and shifted to editorial link acquisition recovered average ranking positions within 10 weeks.

Methodology: Data drawn from 200+ B2B SaaS backlink audits conducted by BlueTree Digital between January 2024 and March 2026. Each audit involved full referring domain extraction via Ahrefs and Semrush, manual domain-level review against Google spam policy criteria, and 12-week post-remediation ranking tracking via Google Search Console. Sites with fewer than 500 referring domains or active manual actions at audit start were excluded from recovery timeline calculations.

The process is iterative; for heavily contaminated profiles, a single remediation cycle is rarely sufficient.

Stage 1: Comprehensive audit and classification

The audit process described in the identification section above produces a categorised list of referring domains across three risk tiers: high-risk (clear unnatural link signals, likely contributing to current ranking suppression), medium-risk (some quality concerns but not clearly scheme-affiliated), and clean (editorially plausible, relevant, with genuine traffic signals).

Document every decision with reasoning. Google’s manual review process for reconsideration requests expects evidence of systematic analysis, not just a list of disavowed URLs.

A documented audit that explains the criteria applied and the risk assessment for each flagged domain demonstrates the genuine remediation effort that distinguishes a site working toward compliance from one performing a surface-level cleanup.
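One way to keep the tiering and the reasoning together is to record each decision as a small structured entry. The sketch below is an assumption about how such a log might look, not a format Google requires; `AuditDecision`, `classify`, and the example signals are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AuditDecision:
    domain: str
    tier: str  # "high", "medium" or "clean", matching the three tiers above
    reasons: list = field(default_factory=list)


def classify(domain, *, scheme_signals, quality_concerns):
    """Assign a risk tier and keep the reasoning alongside the decision."""
    if scheme_signals:
        return AuditDecision(domain, "high", list(scheme_signals))
    if quality_concerns:
        return AuditDecision(domain, "medium", list(quality_concerns))
    return AuditDecision(
        domain, "clean", ["editorially plausible, relevant, genuine traffic"]
    )


# Hypothetical reviewer findings for three domains.
decisions = [
    classify("pbn-site.example",
             scheme_signals=["sitewide footer link", "shared IP with known sellers"],
             quality_concerns=["no organic traffic"]),
    classify("thin-blog.example", scheme_signals=[],
             quality_concerns=["off-topic content"]),
    classify("trade-journal.example", scheme_signals=[], quality_concerns=[]),
]

for d in decisions:
    print(d.tier, d.domain, "; ".join(d.reasons))
```

The signals themselves still come from a human reviewer; the code only enforces that no domain is tiered without a written reason attached.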

Stage 2: Manual outreach for removal

Before using the disavow tool, attempt to remove high-risk links through direct outreach to the linking site’s webmaster or content team.

Manual removal is preferable to disavow because it eliminates the link from Google’s index entirely rather than asking Google to discount it, which is a cleaner signal for reconsideration purposes.

Outreach for removal should be professional, specific (provide the exact URL of the offending link and the page on your site it points to), and non-accusatory.

Many low-quality links exist because a site automatically included your URL in a resource list or directory; the webmaster may be willing to remove it without incident.

Keep records of every outreach attempt, including dates, contact methods used, and responses received, or lack thereof. This documentation forms part of the reconsideration request evidence.
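A spreadsheet is enough for this, but the record can also be kept in code and exported. The following is a minimal sketch assuming one row per contact attempt; the `OutreachAttempt` fields and the sample entry are hypothetical and should be adapted to whatever evidence you intend to attach to a reconsideration request.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class OutreachAttempt:
    link_url: str        # exact URL of the page carrying the link
    target_url: str      # page on your site that the link points to
    contacted_on: date
    method: str          # e.g. "email", "contact form"
    response: str = "no response"


def to_csv(attempts):
    """Serialise outreach attempts to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(OutreachAttempt)]
    )
    writer.writeheader()
    for attempt in attempts:
        row = asdict(attempt)
        row["contacted_on"] = attempt.contacted_on.isoformat()
        writer.writerow(row)
    return buffer.getvalue()


# Hypothetical sample entry.
attempts = [
    OutreachAttempt(
        "https://directory.example/listing/42",
        "https://www.example.com/",
        date(2026, 1, 15),
        "email",
    )
]

print(to_csv(attempts))
```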

Stage 3: Disavow file construction

Links that cannot be removed after documented outreach attempts should be added to a disavow file for submission to Google Search Console.

The disavow tool instructs Google to exclude specified links from its consideration of your site’s backlink profile.

It is a tool of last resort: Google’s own documentation recommends using it only when you have a significant number of spammy, artificial, or low-quality links pointing to your site and you cannot remove them manually.

Our complete guide to disavowing toxic backlinks covers the exact disavow file format, the decision framework for what to include (domain-level vs URL-level disavow), and how to structure the submission to maximise its effectiveness.

The most common disavow mistakes, including over-disavowing clean links and under-disavowing network-affiliated domains, are covered in detail with practical examples.
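For orientation, the sketch below assembles a disavow file as plain text. It assumes the commonly documented format: one entry per line, a `domain:` prefix to disavow a whole domain, a full URL to disavow a single page, and lines starting with `#` treated as comments. The function name `build_disavow_file` and the sample entries are hypothetical; confirm the current format and size limits in Search Console's help pages before uploading anything.

```python
def build_disavow_file(domains, urls, note=""):
    """Build disavow file text: optional comment, domain entries, then URL entries."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Domain-level entries cover every link from that domain.
    lines += [f"domain:{d}" for d in sorted({d.strip().lower() for d in domains})]
    # URL-level entries cover only the specific linking page.
    lines += sorted({u.strip() for u in urls})
    return "\n".join(lines) + "\n"


# Hypothetical entries taken from a completed audit.
text = build_disavow_file(
    domains=["Spam-Directory.example", "pbn-site.example", "pbn-site.example"],
    urls=["https://blog.example.org/paid-post"],
    note="Removal requested by email, no response",
)

print(text)
```

Deduplicating and sorting the entries keeps successive versions of the file easy to compare, which helps when the remediation runs over more than one cycle.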

Stage 4: Sustained white hat acquisition

Remediation without strategy change is a temporary fix. A profile that has been cleaned through disavow but continues to acquire links through the same methods that produced the contamination will re-contaminate within months.

The final stage of recovery is transitioning fully to a white hat link building methodology that builds editorial authority without the penalty risk that manufactured links carry.

This transition is both a compliance requirement and a strategic upgrade: white hat link building produces links that compound over time, contribute to AI search visibility, and survive every algorithm update, because they are the type of links Google’s system was designed to reward.

The editorial links acquired through genuine digital PR, content-led outreach, and linkable asset creation are not just safer than manufactured links; they are structurally more valuable per link.

What does a link acquisition process that never produces unnatural links look like?

A link acquisition process that structurally avoids unnatural links applies three filters at every decision point: editorial independence (would the publisher link without financial motivation?), topical relevance (is the linking content genuinely related to the destination?), and audience reality (does the linking page have real readers who might benefit from following the link?).

These three filters, applied consistently, produce a profile that passes the editorial vouch test by construction.

Prevention is structurally more efficient than remediation. The cost of auditing a contaminated profile, running removal outreach, and disavowing what remains, plus the ranking recovery timeline, significantly exceeds the cost of applying quality filters to prospective links before acquisition.

This is why at BlueTree Digital, every prospective placement is assessed against these criteria before any outreach is initiated.

The pre-acquisition quality filter

Before any link opportunity is pursued, assess it against the following criteria in sequence:

- Does the prospective linking site have genuine organic traffic? A minimum threshold of 1,000 organic monthly visitors (verified in Ahrefs or Semrush) is a reasonable baseline indicator that the site has earned some degree of search engine trust and has a real audience. Sites below this threshold require additional justification (for example, a very recently launched niche publication with strong editorial credentials). Moz link value research indicates that links from pages with at least 1,000 monthly organic visitors are roughly 3 times more likely to generate measurable referral traffic than those from pages below that threshold.

- Is the site topically relevant to the linking context? The linking page’s topic cluster should be semantically adjacent to the destination page. A B2B SaaS link should come from a page discussing business software, productivity, or relevant industry topics, not from a general “business tips” blog that happens to have adequate DR metrics.

- Can the site demonstrate editorial standards? Named authors, verifiable contributor credentials, consistent publishing history, and content written for a genuine reader audience are the markers of editorial quality. Anonymously authored content published at high frequency across multiple unrelated topics is a content farm regardless of its domain metrics.

- Would the anchor text be described as editorial? The proposed anchor text should describe the content of the destination page in natural language, not represent the commercial keyword that the destination page is trying to rank for. Contextual anchor variation within a campaign is the norm; exact-match commercial anchor repetition is the red flag.

When a prospective link opportunity passes all four of these filters, it is highly unlikely to be classified as an unnatural link regardless of how it was acquired, because it exhibits the characteristics of genuine editorial citation.

When it fails any of these filters, the risk profile of acquisition is elevated and the editorial basis for the link is questionable.
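The four criteria above can be sketched as a simple checklist in Python. This is an illustrative assumption about how a team might record the assessment, not a description of any tool: the `Prospect` fields and the `failed_filters` function are hypothetical, and three of the four checks are human judgments entered as true or false. Only the 1,000-visitor baseline comes from the criteria themselves.

```python
from dataclasses import dataclass
from typing import List

MIN_ORGANIC_VISITS = 1_000  # baseline threshold from the first criterion


@dataclass
class Prospect:
    domain: str
    organic_monthly_visits: int    # as reported by Ahrefs or Semrush
    topically_relevant: bool       # reviewer judgment
    has_editorial_standards: bool  # named authors, consistent publishing history
    anchor_is_editorial: bool      # natural-language anchor, not exact-match commercial


def failed_filters(p: Prospect) -> List[str]:
    """Return the names of the filters this prospect fails, in assessment order."""
    failures: List[str] = []
    if p.organic_monthly_visits < MIN_ORGANIC_VISITS:
        failures.append("organic traffic")
    if not p.topically_relevant:
        failures.append("topical relevance")
    if not p.has_editorial_standards:
        failures.append("editorial standards")
    if not p.anchor_is_editorial:
        failures.append("anchor text")
    return failures


# Hypothetical example data
good = Prospect("niche-saas-blog.example", 4_200, True, True, True)
risky = Prospect("general-tips.example", 350, False, True, False)

print(failed_filters(good))   # empty list: passes all four filters
print(failed_filters(risky))
```

Returning the list of failed filters, rather than a single pass or fail value, keeps the reason for rejecting a placement on record. A prospect below the traffic threshold can still be approved manually when the additional justification described above applies.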

Building a linkable asset foundation

The most structurally sound prevention strategy is investing in content that earns links through quality rather than requiring them through outreach.

Original research, proprietary data studies, comprehensive methodology guides, and interactive tools create citation dependencies: other publishers in your niche need to reference these resources to substantiate their own content.

Links acquired this way are editorially independent by definition: they exist because a publisher independently determined that your content was the best available resource on that point.

Our white hat link building guide covers the specific linkable asset types that generate the highest editorial citation rates, including the content characteristics that make a resource genuinely link-worthy versus merely well-produced.

The investment in linkable assets pays compounding returns over time: each piece that earns genuine editorial citation builds the profile quality that makes future link acquisition both more efficient and more impactful.

Concerned about unnatural links in your current backlink profile?

If your site has been through periods of active link building under older strategic frameworks, or if you have experienced unexpected ranking drops following a Google spam update and are uncertain whether a link profile issue is the cause, a professional audit gives you a definitive answer rather than an estimate.

BlueTree Digital provides free backlink profile reviews for B2B SaaS and technology companies.

We assess your full referring domain profile against the criteria covered on this page, identify unnatural link patterns and their likely impact, and give you a clear remediation roadmap and a realistic picture of what a clean profile looks like for your domain.

Request a free backlink profile review

For the complete white hat link building strategy that builds editorial authority without any unnatural link risk, including the advanced tactics we use for digital PR, editorial outreach, and linkable asset creation, read our white hat link building guide.

Frequently Asked Questions

What is the fastest way to check if I have unnatural links?

The fastest initial check is to run your domain through Semrush’s Backlink Audit tool or Ahrefs Site Explorer and filter by toxicity score. Domains above the high-risk threshold (60%+ in Semrush’s model) should be your immediate review priority. Cross-reference this with Google Search Console’s Manual Actions section: if a manual action is present, it will specify the nature of the violation. The absence of a manual action does not mean you have no unnatural links; it means any devaluation is happening algorithmically through SpamBrain without a formal notification.
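Once referring domains have been exported from an audit tool, the triage step can be sketched in a few lines of Python. The column names `domain` and `toxicity_score` below are assumptions made for illustration; real exports use their own headers, so adjust them to match your file. The 60 threshold mirrors the high-risk figure cited above.

```python
import csv
import io
from typing import List

HIGH_RISK_THRESHOLD = 60.0  # high-risk cut-off cited above


def high_risk_domains(csv_text: str, threshold: float = HIGH_RISK_THRESHOLD) -> List[str]:
    """Return domains at or above the threshold, highest score first."""
    reader = csv.DictReader(io.StringIO(csv_text))
    flagged = []
    for row in reader:
        score = float(row["toxicity_score"])  # hypothetical column name
        if score >= threshold:
            flagged.append((score, row["domain"]))
    flagged.sort(reverse=True)
    return [domain for _, domain in flagged]


# Hypothetical export contents
sample_export = (
    "domain,toxicity_score\n"
    "linkfarm.example,87\n"
    "trade-journal.example,12\n"
    "cheap-directory.example,60\n"
)
print(high_risk_domains(sample_export))
```

The output is a review queue rather than a disavow list. Each flagged domain still needs manual inspection before any removal outreach or disavow decision.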

Can unnatural links hurt my site even if I didn’t build them?

Yes. Backlink spam (links injected into your profile by competitors or third parties through negative SEO attacks) can contribute to an unnatural link pattern regardless of your involvement. Google’s official position is that it primarily ignores links it cannot attribute to your deliberate actions, but heavily contaminated profiles from third-party spam can still suppress rankings algorithmically. If you suspect a negative SEO attack, proactive disavow submission of clearly spammy links is the appropriate response, combined with documentation of when the links appeared relative to any ranking changes.

How long does it take to recover from an unnatural link penalty?

Algorithmic devaluation recovery typically takes 4–12 weeks following effective remediation (link removal or disavow file submission) combined with continued clean link acquisition. Manual action recovery requires a successful reconsideration request, which typically takes 2–4 weeks per review cycle, with multiple rounds potentially required. More severely contaminated profiles, or those where the underlying link acquisition strategy has not genuinely changed, can take 6–18 months to show meaningful recovery even after remediation steps are taken.

What is the difference between an unnatural link and a toxic link?

These terms are often used interchangeably but have slightly different scopes. An unnatural link is defined by its acquisition method: it was placed for ranking manipulation rather than editorial reasons. A toxic link is defined by its effect: it contributes a negative quality signal to the receiving site’s backlink profile. Most unnatural links are also toxic, but not every toxic link is deliberately unnatural: a site that has attracted large volumes of backlink spam from hacked sites has toxic links that were not acquired through any deliberate action.

Should I always disavow links I think are unnatural?

No. Google’s disavow tool is recommended only for significant volumes of clearly manipulative links that you cannot remove through direct outreach. Disavowing individual links from sites with minor quality concerns, or over-disavowing links from legitimate sites that happen to have low authority metrics, can remove clean ranking signals from your profile unnecessarily. Our guide to disavowing toxic backlinks covers the decision framework for what to include in a disavow file and what to leave alone, including the common over-disavow mistakes that can inadvertently suppress legitimate link equity.